Five years ago, Samsung introduced the Galaxy S4. It had a big 5-inch Full HD display, an octa-core processor, and double the RAM. The other place where it got a big upgrade was the camera. The main camera was upgraded to 13MP from the Galaxy S3's 8MP. It was a time when all the talk was about pixels.

In subsequent years, manufacturers made ambitious strides with 16MP, 20MP and even 41MP sensors. But OEMs were facing a constraint at the start of the decade. They knew the fundamentals of good cameras: large apertures, bigger pixels, and bigger sensors. The problem was size. Bigger sensors take up more space, and the trend was to make phones thinner and lighter. So OEMs compromised on the camera in favor of appealing looks and ergonomics. Their hypothesis was proved right when some of the most common complaints about the Lumia 1020 (the 41MP beast) were about its size, its looks, and its camera hump. And so followed the dark years of mobile photography, when real innovation was absent and change was merely incremental.

Then, in late 2016, something changed. Apple introduced the iPhone 7 Plus with dual cameras. There were two main breakthroughs. First, it introduced optical zoom, so no more cropping in to zoom. Second, you could snap portraits with convincing “bokeh”.

However, a bigger leap in mobile photography came when Google introduced the Pixel and changed the way people thought about mobile cameras. The Google Pixel had the same Sony IMX378 sensor found in other phones like the HTC U Ultra and BlackBerry KEYone. What Google did differently was use software to enhance the output. The idea was to focus more on software than on hardware. Using HDR+, Google would take multiple underexposed shots and then combine them to produce the final image.
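To see why combining several short exposures can beat a single shot, here is a minimal sketch in Python. It is not Google's actual pipeline, which this article does not detail. It assumes the frames are already perfectly aligned, models sensor noise as simple Gaussian noise, and merges by plain averaging. The names `capture_frame`, `merge_frames`, and `noise_level`, as well as the scene and noise values, are hypothetical and chosen only for illustration.

```python
import random
import statistics


def capture_frame(scene, noise_sigma, rng):
    """Simulate one short-exposure frame: true brightness plus random sensor noise."""
    return [pixel + rng.gauss(0.0, noise_sigma) for pixel in scene]


def merge_frames(frames):
    """Average the burst pixel by pixel (assumes the frames are already aligned)."""
    count = len(frames)
    return [sum(values) / count for values in zip(*frames)]


def noise_level(image, scene):
    """Standard deviation of the error between an image and the true scene."""
    return statistics.pstdev([a - b for a, b in zip(image, scene)])


rng = random.Random(42)          # fixed seed so the run is repeatable
scene = [0.2] * 10_000           # hypothetical flat, dim patch of the scene
burst = [capture_frame(scene, noise_sigma=0.05, rng=rng) for _ in range(9)]

single = burst[0]
merged = merge_frames(burst)

print(f"single-frame noise: {noise_level(single, scene):.4f}")
print(f"merged-burst noise: {noise_level(merged, scene):.4f}")
```

Because the noise in each frame is independent, averaging nine frames should cut it to roughly a third of the single-frame level, which is why a burst of dark, noisy shots can produce a cleaner final image. A real camera pipeline also has to align the frames and cope with motion, which this sketch leaves out.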

The results were astounding. The Pixel's photos were not iteratively better, they were generationally better, and so we entered the realm of computational photography. Since then, with every new version of the Pixel, Google has done wonders. And while the hardware has improved, it’s the software that always steals the spotlight.

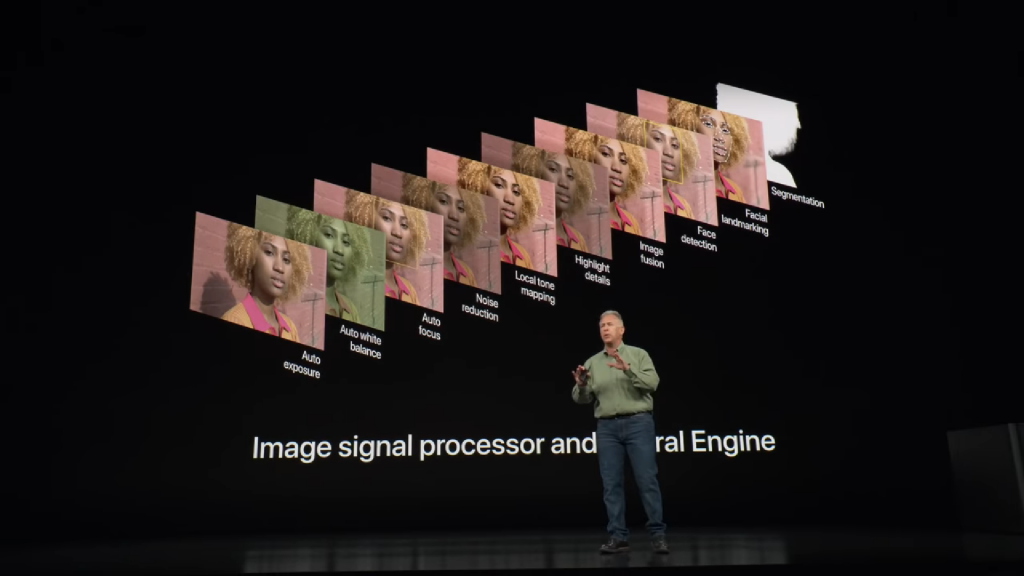

2018 was a big year for mobile photography, as every major OEM adopted computational photography of some sort. OEMs introduced some interesting things like variable aperture, scene detection, four cameras, and even a 40MP camera without a camera bump. The biggest changes came with the iPhone’s new Smart HDR and, yes, the Google Pixel 3. The iPhone Xs, with its Smart HDR, uses advanced software algorithms to produce images with a very wide dynamic range. The Pixel 3 was far more impressive here.

Apart from the iterative improvements, the Pixel 3 introduced two new things: Super Res Zoom and Night Sight. Super Res Zoom is a fancy software trick that allows the Pixel 3 to zoom in digitally without losing as much detail as you would with conventional crop-based zooming. Yes, it is not as good as optical zoom, but it is interesting to see how comparable the results are. Night Sight was something that honestly made me smile. I don’t know what sort of magic Google is doing here, but Night Sight on the Pixel 3 makes low-light shots gorgeous.

We wanted to see how good the software really is. So we took a OnePlus 6 and installed the Pixel 3 camera app. A thing to note here is that this is not the official app and the port is still in beta, but the results are still going to blow you away.

The images on the left were taken with the stock camera app, while the images on the right were taken with the Pixel 3 camera app (comparisons are best viewed using a desktop browser). What you will see is that the images on the right are sharper, have better colors, better contrast, and lower noise, and are generally more pleasing. That this was a beta software port for the OnePlus 6 makes it even more amazing.

So what is the future going to be about? Well, there is no doubt that camera hardware will continue to play an important role. OEMs will try to pack in bigger sensors, wider apertures, or even more cameras, as they enable things like wide-angle shots and better portraits. However, the emphasis will start to shift more toward the software side. 2019 will be about using AI-based software to produce better shots by combining multiple photos from multiple cameras. The reliance on software will keep increasing as phones get more powerful processors and more sophisticated algorithms are developed. 2019 is shaping up to be an exciting year for mobile photography, and we can’t wait to see what is coming next.
