Meta is now facing a formal regulatory investigation in the United Kingdom and a lawsuit in the United States over how it handles footage captured through its Ray-Ban AI smart glasses, following the publication of a Swedish investigative report revealing that contracted workers in Kenya routinely reviewed deeply personal content recorded through the devices.

The investigation and lawsuit mark a significant escalation in scrutiny of a product that Meta has positioned as central to its long-term hardware ambitions, and they arrive at a moment when the company is already engaged in a separate court trial over allegations that it prioritised growth over the safety of teenage users.

The immediate cause was a report by Swedish publication Svenska Dagbladet, which found that Meta contractors based in Nairobi were labelling and categorising footage captured through the AI glasses for data training purposes. That footage, according to the report, frequently included bathroom visits, sexual activity, and other intimate moments recorded by wearers who were apparently unaware that their interactions with the AI assistant would result in human review of the content. Screenshots of credit card and bank account details also appeared in reviewed footage.

The UK’s Information Commissioner’s Office has launched an official investigation into the claims. In the United States, a lawsuit has been filed alleging that Meta violated privacy laws and engaged in false advertising by allowing this kind of personal exposure without adequately informing users.

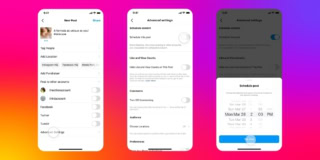

Meta has said that any media captured through its glasses remains on the user’s device unless the user chooses to share it, and that human contractor review applies only to content that users actively upload. The company also notes that its Meta AI terms of service include language permitting human review of AI interactions.

Users who invoke the AI assistant to analyse something in their environment are effectively sharing that footage with Meta’s systems, a step many may not recognise as constituting a “choice to upload.” The terms of service language permitting human review is present but is unlikely to register as meaningful informed consent for users who do not read service agreements in detail, which is to say, most users.

The gap between what Meta discloses and what users understand they are consenting to is precisely what regulators and plaintiffs are now examining.

The timing could hardly be more awkward for Meta. The company has invested heavily in the Ray-Ban smart glasses as the leading consumer entry point into wearable AI, with sales exceeding seven million pairs in 2025. The product is also the foundation on which Meta intends to eventually build facial recognition capabilities, a feature the Swedish report noted is already under internal development.

A company that cannot reliably communicate to users that their intimate environments may be reviewed by human contractors in another country is also a company that will face an extremely difficult public reception when it announces that its glasses can identify strangers’ faces on demand.

Meta’s history on data protection issues has not inspired confidence. The company has faced fines, investigations, and consent orders across multiple jurisdictions over the past decade, and each new episode narrows the goodwill available to it when it argues that its intentions are sound even if its disclosures fell short.

Whether the UK investigation and the US lawsuit will result in meaningful changes to how Meta operates its human review pipeline for the glasses remains to be seen. What is already clear is that the company will need to give users a far more visible and comprehensible account of its data practices before its smart glasses ambitions can scale without a sustained regulatory headwind.