Researchers at Xidian University in China have demonstrated a light-powered computing chip capable of running reinforcement learning tasks entirely within the optical domain, removing a fundamental limitation that has held back light-based AI hardware for over a decade.

The findings, published in the journal Optica, point toward a future where autonomous vehicles and robots learn directly from their environments using chips that are dramatically faster and more energy-efficient than today’s electronic processors.

Photonic spiking neural systems have long been considered a promising path toward AI hardware that outpaces conventional electronics. These systems mimic how biological neurons communicate, but use rapid pulses of light in place of electrical signals. Light pulses travel faster and consume far less energy. The problem has always been in the learning step.

“Photonic spiking neural systems use brief optical pulses, or spikes, to emulate neural signaling, but they can typically only process the linear parts of computation using light,” said research team leader Shuiying Xiang from Xidian University.

Every previous attempt to build such systems ran into the same wall: the nonlinear operations that actually make learning and decision-making possible required converting signals back into electrical form for processing.

“Previously, the nonlinear steps that make learning and decision making possible required the signal to be converted back into electronic signals. This adds delay and undercuts the speed and energy advantages of photonics,” Xiang explained.

The new design removes that conversion step entirely, keeping all computation, both linear and nonlinear, inside the optical domain.

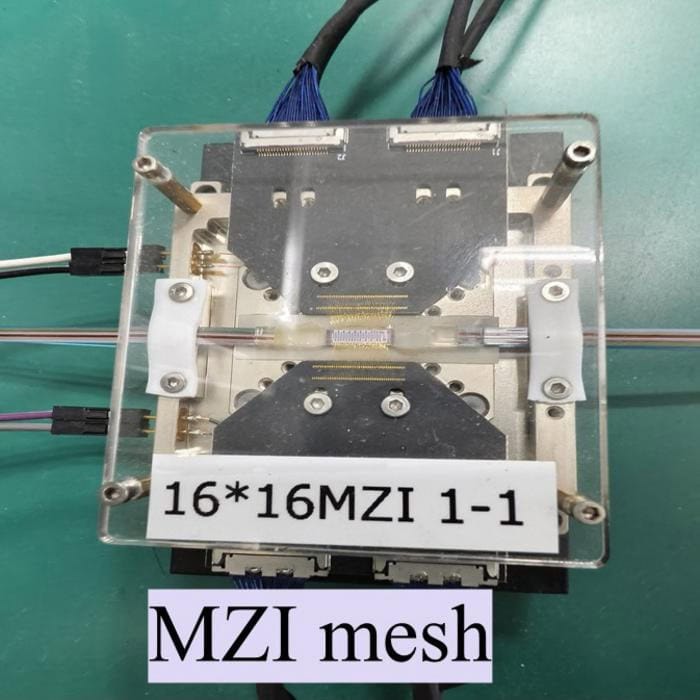

The researchers designed a programmable photonic neuromorphic platform built from two chips working in tandem. The first is a 16-channel photonic neuromorphic processor containing 272 trainable parameters, capable of handling multiple optical signals simultaneously. The second features a distributed feedback laser array with a saturable absorber component that enables low-threshold nonlinear optical spiking, which is the key element that allows learning to happen without electronics.
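To make the split between the two chips concrete, here is a minimal software sketch of a linear stage followed by a spiking nonlinearity. It is an illustration only, not the researchers' design: the function names, the hard threshold, and the 16-by-16 weight layout with 16 biases are all assumptions. That layout happens to total 272 parameters, but the article does not say how the reported 272 are actually arranged.

```python
import random

CHANNELS = 16  # channel count reported in the article


def linear_stage(weights, biases, inputs):
    """Weighted sum per output channel: the linear part of the computation."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def spiking_stage(drive, threshold=1.0):
    """Hypothetical all-or-nothing nonlinearity: spike (1) only above threshold."""
    return [1 if d > threshold else 0 for d in drive]


rng = random.Random(0)
# Assumed layout: one weight per input/output pair plus one bias per output.
weights = [[rng.uniform(-1, 1) for _ in range(CHANNELS)] for _ in range(CHANNELS)]
biases = [0.0] * CHANNELS
inputs = [rng.random() for _ in range(CHANNELS)]

drive = linear_stage(weights, biases, inputs)
spikes = spiking_stage(drive)
n_params = CHANNELS * CHANNELS + CHANNELS

print("parameters in this assumed layout:", n_params)
print("spikes per channel:", spikes)
```

Doubling the inputs doubles the output of `linear_stage`, but `spiking_stage` still emits either a spike or nothing. That threshold behaviour is the kind of nonlinear step that earlier photonic systems handed off to electronics.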

To test the system’s practical capability, the team used reinforcement learning, the same AI training approach that underlies many modern robotic and autonomous systems, where a machine learns through repeated trial and error rather than labelled training data.

“We used this system to demonstrate reinforcement learning, supported by a hardware and software collaborative framework that trains and runs the neural network. The system was able to learn quickly through trial and error, showing potential as a fast, low-latency solution that could be used for applications such as autonomous driving and embodied intelligence,” Xiang said.

The system was tested on two standard control benchmarks. One required balancing a pole on a moving cart, the classic CartPole task used widely to evaluate reinforcement learning systems. The other required stabilising an inverted pendulum. Hardware decisions closely matched the software model in both tests, with accuracy dropping by only 1.5% on CartPole and 2% on the pendulum task.
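For readers unfamiliar with CartPole, the sketch below simulates the task in plain Python and learns a controller by trial and error. It is a software-only toy under stated assumptions: the physics constants follow the commonly used textbook formulation of the benchmark, and the learning rule is random hill climbing over a four-weight linear policy, a far simpler stand-in for the spiking-network training the researchers describe. The function names, starting state, and trial count are all hypothetical.

```python
import math
import random

# Constants from the commonly used CartPole formulation (assumed here).
GRAVITY = 9.8
MASS_CART = 1.0
MASS_POLE = 0.1
TOTAL_MASS = MASS_CART + MASS_POLE
HALF_LENGTH = 0.5            # half the pole length, in metres
FORCE = 10.0                 # magnitude of each push, in newtons
DT = 0.02                    # simulation time step, in seconds
MAX_ANGLE = math.radians(12)
MAX_POSITION = 2.4
MAX_STEPS = 200


def step(state, push_right):
    """Advance the cart and pole by one Euler step."""
    x, x_dot, theta, theta_dot = state
    force = FORCE if push_right else -FORCE
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    temp = (force + MASS_POLE * HALF_LENGTH * theta_dot ** 2 * sin_t) / TOTAL_MASS
    theta_acc = (GRAVITY * sin_t - cos_t * temp) / (
        HALF_LENGTH * (4.0 / 3.0 - MASS_POLE * cos_t ** 2 / TOTAL_MASS))
    x_acc = temp - MASS_POLE * HALF_LENGTH * theta_acc * cos_t / TOTAL_MASS
    return (x + DT * x_dot, x_dot + DT * x_acc,
            theta + DT * theta_dot, theta_dot + DT * theta_acc)


def run_episode(weights, start=(0.0, 0.0, 0.05, 0.0)):
    """Return how many steps the pole stays up under a linear policy."""
    state = start
    for t in range(MAX_STEPS):
        # Push right when the weighted sum of the state is positive.
        push_right = sum(w * s for w, s in zip(weights, state)) > 0
        state = step(state, push_right)
        if abs(state[0]) > MAX_POSITION or abs(state[2]) > MAX_ANGLE:
            return t + 1
    return MAX_STEPS


def train(trials=200, noise=0.5, seed=0):
    """Random hill climbing: keep a perturbed policy if it scores at least as well."""
    rng = random.Random(seed)
    best_w = [0.0, 0.0, 0.0, 0.0]
    best_score = run_episode(best_w)
    history = [best_score]
    for _ in range(trials):
        candidate = [w + rng.gauss(0, noise) for w in best_w]
        score = run_episode(candidate)
        if score >= best_score:
            best_w, best_score = candidate, score
        history.append(best_score)
    return best_w, best_score, history


best_w, best_score, history = train()
print("steps balanced before training:", history[0])
print("steps balanced after training:", best_score)
```

The untrained policy always pushes the same way and drops the pole within a few steps. Each trial then either keeps or discards a small random change to the weights, so the best score can only hold or improve.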

On raw computing metrics, the photonic linear processing reached 1.39 tera operations per second per watt. Nonlinear computation achieved nearly 988 giga operations per second per watt. On-chip computing latency measured just 320 picoseconds, a speed advantage that conventional electronic processors cannot approach.
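The efficiency figures are easier to compare as energy per operation. Operations per second per watt is the same as operations per joule, so taking the reciprocal gives joules per operation. The short calculation below is a unit conversion of the reported numbers, not a figure from the paper, and it treats "nearly 988" as exactly 988; the function name is hypothetical.

```python
def energy_per_op_picojoules(ops_per_second_per_watt: float) -> float:
    """Convert ops/s/W (equivalently ops/J) into picojoules per operation."""
    return 1e12 / ops_per_second_per_watt


linear_pj = energy_per_op_picojoules(1.39e12)    # 1.39 tera-ops/s/W
nonlinear_pj = energy_per_op_picojoules(988e9)   # about 988 giga-ops/s/W

print(f"linear:    {linear_pj:.2f} pJ per operation")
print(f"nonlinear: {nonlinear_pj:.2f} pJ per operation")
```

On these numbers, a linear operation costs roughly 0.72 picojoules and a nonlinear one roughly 1.01 picojoules.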

The current prototype operates with 16 optical channels. The team has announced plans to scale the architecture to a 128-channel photonic spiking neural chip, which would support more complex reinforcement learning tasks closer to real-world deployment conditions. The researchers also aim to build compact hybrid photonic systems suitable for edge computing, where low power consumption and fast local processing are both critical requirements.

If the architecture scales successfully, photonic AI hardware could offer a credible alternative to electronic GPU clusters for applications such as robotics, autonomous vehicles, and real-time environmental adaptation, where latency and energy consumption are the binding constraints on what machines can do.
