Instagram is rolling out a new safety feature aimed at parents of teenage users. The company will now alert parents if their child repeatedly searches for terms related to suicide or self-harm within a short period. The move adds another layer to Instagram’s existing teen safety controls and reflects growing pressure on social platforms to act earlier when warning signs appear.

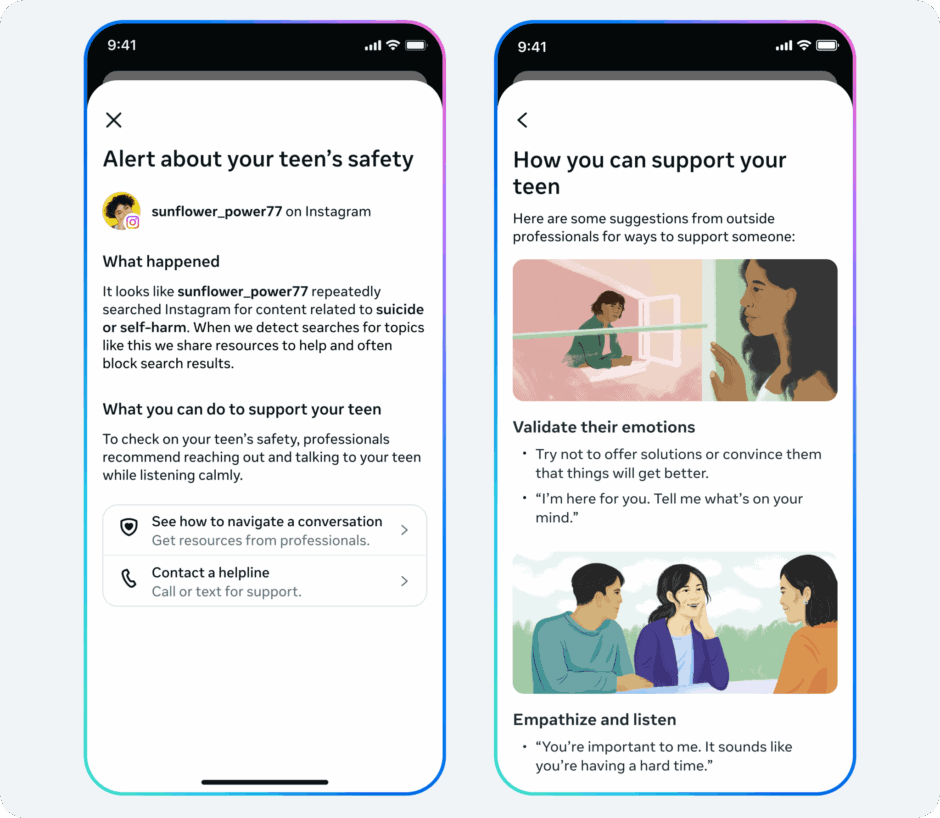

Under the new system, parents who use Instagram’s supervision tools will receive a notification when this search pattern crosses a set threshold. From there, they can access optional resources designed to help them start a conversation with their teen about mental health and online behavior. The alerts will begin rolling out next week in the United States, the United Kingdom, Australia, and Canada. Instagram plans to expand the feature to more regions later.

In a blog post, Instagram said it intentionally set the threshold at several searches within a short time frame. The company acknowledged that this approach could sometimes trigger alerts when there is no serious issue, but said the decision reflects caution. According to Instagram, experts support starting carefully and adjusting over time based on feedback.

The company also stressed that teens already face limits around this type of content. Search results tied to suicide and self-harm are blocked for younger teen users. In addition, under its current policies, Instagram does not show teens content related to these topics.

Looking ahead, Instagram confirmed it is developing a similar alert system tied to its AI tools. The company did not share details; more information is expected later this year.