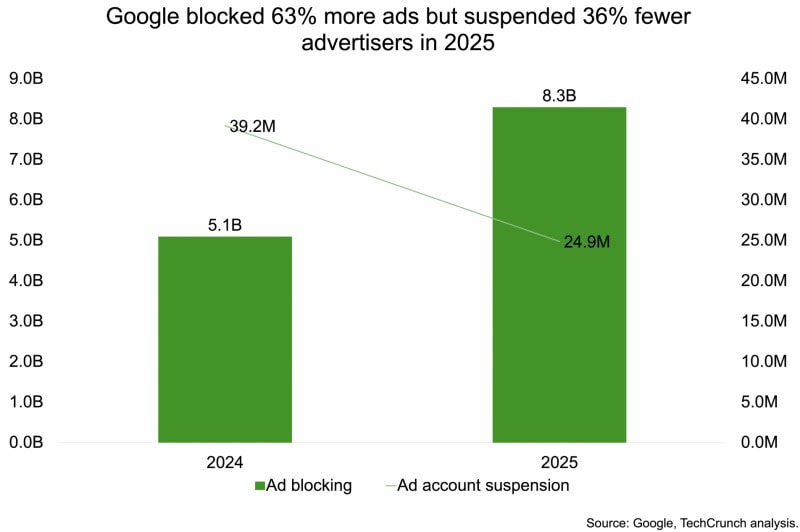

Google blocked a record 8.3 billion ads globally in 2025, up from 5.1 billion the previous year, while suspending far fewer advertiser accounts as the company transitions toward AI-driven enforcement targeting individual ads rather than banning entire accounts.

The search giant attributed the disparity to growing use of artificial intelligence, particularly its Gemini models, which Google says allow it to detect and block policy-violating ads earlier and with greater precision. AI-driven systems caught more than 99% of policy-violating ads last year before they were shown to users, according to the company.

The findings come from Google’s 2025 Ads Safety Report and reflect a broader change in enforcement strategy. While more problematic ads are being stopped, fewer advertiser accounts are being suspended, indicating a transition from banning bad actors outright to blocking individual ads on a case-by-case basis.

Google said the rise in blocked ads also reflects growing use of generative AI by scammers to produce deceptive content at scale, with its Gemini models helping detect patterns across large campaigns and block them earlier in the advertising pipeline.

The shift mirrors a wider push by Google to integrate its Gemini models more deeply into core products and infrastructure, including advertising. The company is increasingly using AI to automate campaign creation, detect policy violations, and respond to emerging threats in real time.

Among the blocked ads and suspended accounts, 602 million ads and 4 million advertiser accounts were linked to scams. The company removed over 1.7 billion ads and suspended 3.3 million advertiser accounts in the United States in 2025, with ad network abuse, misrepresentation, and sexual content among the most common violations.

In India, Google’s largest market by users, the company blocked 483.7 million ads, nearly double the previous year, even as account suspensions fell to 1.7 million from 2.9 million. Trademarks, financial services, and copyright issues were among the top violations in the Indian market.

Keerat Sharma, vice president and general manager of ads privacy and safety at Google, told reporters during a virtual briefing that the company has shifted toward more targeted, AI-driven enforcement at the level of individual ad creatives, rather than relying on a blunter instrument such as advertiser suspensions. He added that the approach has reduced incorrect suspensions by 80% year over year.

Google’s layered defenses, including advertiser verification requiring businesses to confirm their identity before running ads, are designed to prevent bad actors from creating accounts in the first place. Sharma said this has contributed to the decline in suspensions alongside the more precise AI detection systems.

The advertiser verification process represents a preventive measure that stops potentially problematic advertisers before they can launch campaigns. This front-end filtering reduces the need for account suspensions later in the enforcement pipeline while maintaining platform safety standards.

The numbers are likely to fluctuate over time as Google rolls out new defenses and bad actors adapt their tactics, according to Sharma. The company aims to stop harmful ads as early in the pipeline as possible, ideally before campaigns are created or submitted for review.

The enforcement shift raises questions about balancing precision with effectiveness. While blocking individual ads may reduce collateral damage to legitimate advertisers who occasionally violate policies, critics may question whether the approach allows repeat offenders to continue operating with fewer consequences.

Google’s AI systems analyze multiple signals to identify policy violations, including ad content, landing pages, advertiser behavior patterns, and user reports. The Gemini models can process these signals at scale and identify subtle patterns that might indicate coordinated scam operations or policy evasion tactics.

The increase in blocked ads despite fewer account suspensions suggests Google’s systems are catching more policy violations per advertiser. This could indicate either that scammers are testing multiple ad variations to evade detection, or that AI systems are identifying marginal violations that might previously have been overlooked.

The company’s approach reflects broader industry trends in content moderation, where platforms increasingly use AI to make enforcement decisions at scale. However, concerns persist about algorithmic accuracy, appeals processes, and whether automated systems can handle nuanced policy judgments.

Generative AI has created new challenges for advertising platforms as scammers leverage the technology to produce convincing fake content, phishing pages, and deceptive claims at unprecedented scale. Google’s countermeasure involves using its own AI models to detect patterns indicative of AI-generated scam content.

The arms race between platform enforcement and scammer innovation continues to escalate, with both sides deploying increasingly sophisticated AI tools. Google’s reliance on Gemini models represents a bet that its AI capabilities can stay ahead of adversaries using similar technologies.