Google and Jigsaw, a technology incubator within Alphabet, have released the “Perspective API” to help identify toxic comments using machine learning.

Jigsaw builds technology to tackle global security challenges such as online harassment, digital attacks, abuse and violent extremism. The announcement blog post reads:

“Imagine trying to have a conversation with your friends about the news you read this morning, but every time you said something, someone shouted in your face, called you a nasty name or accused you of some awful crime. You’d probably leave the conversation. Unfortunately, this happens all too frequently online as people try to discuss ideas on their favorite news sites but instead get bombarded with toxic comments.”

Cyberbullying is one of the negative aspects of the digital age. According to statistics, 72% of US internet users have witnessed harassment online. This discourages many people from engaging in healthy conversation on the internet, especially on social media. Many developers and publishers want to encourage discussion around their content, but sifting through large volumes of comments to identify the abusive ones takes a lot of time and energy.

What is Perspective API?

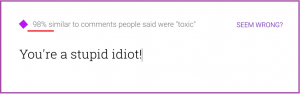

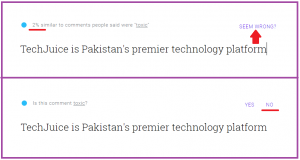

Perspective API (Application Programming Interface) uses machine learning to help host better conversations online. It reviews comments and scores them based on how similar they are to comments that people have rated as “toxic” or abusive. To collect the data, Perspective ran experiments with Wikipedia, The New York Times, The Economist and The Guardian.
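To make the scoring idea concrete, here is a minimal Python sketch of what asking for a toxicity score and reading it back might look like. The endpoint URL and the JSON field names (`requestedAttributes`, `attributeScores`, `summaryScore`) reflect our understanding of Perspective's public documentation and should be treated as assumptions to verify. The helper names `build_request` and `extract_toxicity` are our own, and `sample_response` is a hand-written stand-in, not real API output. No network call is made.

```python
import json

# Assumed endpoint; check the current Perspective documentation before use.
ANALYZE_URL = "https://commentanalyzer.googleapis.com/v1alpha1/comments:analyze"


def build_request(text, languages=("en",)):
    """Build a JSON body asking for a TOXICITY score for one comment."""
    return {
        "comment": {"text": text},
        "languages": list(languages),
        "requestedAttributes": {"TOXICITY": {}},
    }


def extract_toxicity(response):
    """Read the summary toxicity score (0.0 to 1.0) from a response dict."""
    return response["attributeScores"]["TOXICITY"]["summaryScore"]["value"]


# Hand-written example of the assumed response shape, not actual API output.
sample_response = {
    "attributeScores": {
        "TOXICITY": {"summaryScore": {"value": 0.92, "type": "PROBABILITY"}}
    }
}

# Show the request body that would be POSTed to ANALYZE_URL with an API key.
print(json.dumps(build_request("You are a nasty person."), indent=2))
print(extract_toxicity(sample_response))
```

A score close to 1.0 would mean the comment closely resembles comments that people rated as toxic; a score close to 0.0 would mean it does not.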

It will be up to publishers to decide what they want to do with the information Perspective provides. For example, a publisher can flag comments for moderators to review and decide whether to include them in a conversation. A publisher can also provide tools that show people the potential toxicity of a comment as they write it.
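The two publisher options described above could be sketched in Python roughly as follows. This is only an illustration: the function names `triage` and `author_hint` and the threshold values are hypothetical choices of ours, not part of Perspective, and each publisher would pick its own cut-offs.

```python
def triage(score, review_threshold=0.8):
    """Decide what to do with a comment given its toxicity score (0.0 to 1.0).

    The threshold is a hypothetical publisher setting, not a Perspective default.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0.0 and 1.0")
    # Send likely-toxic comments to a human moderator instead of auto-removing.
    return "review" if score >= review_threshold else "publish"


def author_hint(score, warn_threshold=0.6):
    """Return feedback to show the writer while typing, or None if not needed."""
    if score >= warn_threshold:
        return f"Your comment may be seen as toxic (score {score:.2f})."
    return None


# Example scores are made up for illustration.
for s in (0.15, 0.65, 0.92):
    print(s, triage(s), author_hint(s))
```

Keeping the final decision with a moderator, as in `triage`, matches the point that the score is an input to the publisher's process and not a verdict in itself.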

We tried Perspective, and the results are below. The output is not 100 percent accurate, as the API is still in development. You can also try it here.

What’s next?

The API is complex and still under development. Perspective will improve over time and develop a better understanding of toxic comments. Future models will work in languages other than English, and other models will identify different perspectives, such as when comments are off-topic.
