A team at Pointcloud GmbH in Zürich has developed a 4D imaging sensor small enough to fit on a single silicon chip, combining the ability to map three-dimensional surroundings with real-time speed measurement of moving objects.

The work is a step toward giving robots and other machines fast, comprehensive awareness of both where objects are and how they are moving.

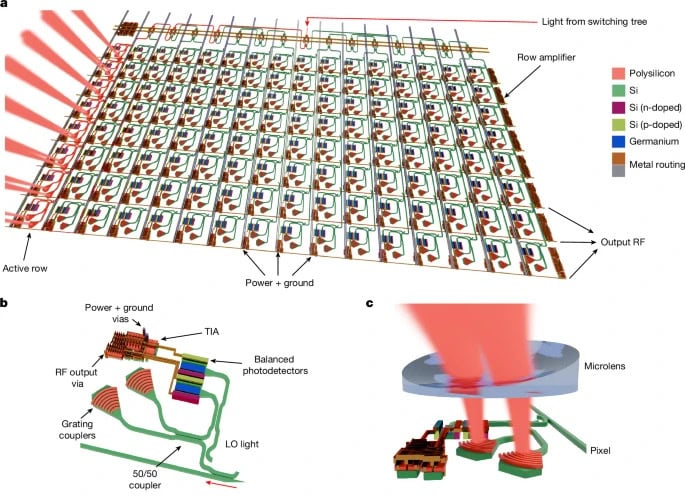

The chip uses a focal plane array (FPA) of 61,952 stationary pixels, each acting as a tiny sensor that emits laser light toward the scene and detects the reflected signal. Laser light from an external source is fed into the chip and routed through a network of optical switches that direct it to groups of pixels in sequence. The system uses a technique called frequency-modulated continuous-wave (FMCW) LiDAR, which emits a continuous beam whose frequency is swept over time, rather than the short pulses used by conventional LiDAR systems.
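The article does not describe how the switch network is laid out. One possible arrangement is a binary tree of 1×2 optical switches, where each level of the tree picks one of two branches. The sketch below assumes that layout and a hypothetical number of pixel groups (`num_groups`, with helper names of our own choosing) to show how a group index maps to switch settings and how groups can be visited one after another.

```python
def switch_levels(num_groups: int) -> int:
    """Levels of 1x2 switches a binary tree needs to reach num_groups outputs (assumed layout)."""
    # (n - 1).bit_length() equals ceil(log2(n)) for n >= 2, computed exactly with integers.
    return max(1, (num_groups - 1).bit_length())


def switch_settings(group: int, num_groups: int) -> list[int]:
    """Switch states (0 = first branch, 1 = second branch) from the root down to one group."""
    if not 0 <= group < num_groups:
        raise ValueError("group index out of range")
    levels = switch_levels(num_groups)
    # The most significant bit of the group index sets the root switch.
    return [(group >> (levels - 1 - level)) & 1 for level in range(levels)]


def scan_sequence(num_groups: int):
    """Yield (group, settings) in the order light is steered, one group at a time."""
    for group in range(num_groups):
        yield group, switch_settings(group, num_groups)


# Hypothetical example: 16 pixel groups need a 4-level switch tree.
for group, settings in scan_sequence(16):
    print(group, settings)
```

Because only one path through the tree is open at a time, the full laser power reaches one group while the others wait their turn; how the real chip groups its 61,952 pixels is not specified here.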

The difference matters. Most drones and robots today rely on pulsed LiDAR, which fires bursts of light and times how long they take to bounce back. It works, but such systems tend to be bulky, and they cannot measure velocity directly: to determine how fast an object is moving, the system has to compare two separate frames and calculate the change in position. The new chip eliminates that extra step by measuring distance and velocity in a single reading. In FMCW LiDAR, the delay of the returning light reveals distance, while the Doppler shift in its frequency reveals how fast the object is moving toward or away from the sensor. Researchers describe this as adding a fourth dimension to conventional 3D spatial data.
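To make the single-reading idea concrete, here is a minimal sketch of the textbook triangular-chirp FMCW calculation: the frequency difference ("beat") between outgoing and returning light is measured once on the rising ramp and once on the falling ramp, and the average and difference of the two give range and radial velocity. The wavelength, chirp bandwidth and chirp duration below are assumed values chosen for illustration; the article does not report the chip's actual settings, and the function names are hypothetical.

```python
# Assumed parameters, for illustration only (not taken from the study).
C = 299_792_458.0               # speed of light, m/s
WAVELENGTH = 1.55e-6            # assumed laser wavelength, m
BANDWIDTH = 1.0e9               # assumed chirp bandwidth, Hz
CHIRP_TIME = 10e-6              # assumed duration of one chirp ramp, s
SLOPE = BANDWIDTH / CHIRP_TIME  # chirp slope, Hz/s


def beat_frequencies(range_m: float, radial_velocity: float) -> tuple[float, float]:
    """Forward model: beat frequencies on the up-ramp and down-ramp.

    radial_velocity > 0 means the target is approaching the sensor.
    """
    f_range = 2.0 * range_m * SLOPE / C              # shift from round-trip delay
    f_doppler = 2.0 * radial_velocity / WAVELENGTH   # Doppler shift from motion
    return f_range - f_doppler, f_range + f_doppler


def range_and_velocity(f_up: float, f_down: float) -> tuple[float, float]:
    """Invert one up/down measurement pair into distance (m) and radial velocity (m/s)."""
    f_range = (f_up + f_down) / 2.0     # Doppler terms cancel in the average
    f_doppler = (f_down - f_up) / 2.0   # range terms cancel in the difference
    return f_range * C / (2.0 * SLOPE), f_doppler * WAVELENGTH / 2.0


# A target 65 m away approaching at 5 m/s.
f_up, f_down = beat_frequencies(65.0, 5.0)
r, v = range_and_velocity(f_up, f_down)
print(f"{r:.2f} m, {v:.2f} m/s")  # 65.00 m, 5.00 m/s
```

This simple inversion assumes the Doppler shift is smaller than the range-induced shift so that both beat frequencies stay positive, and sign conventions differ between texts. In a real sensor the two beat frequencies would first be extracted from the detector signal, typically with a Fourier transform.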

The sensor has been demonstrated at ranges of up to 65 meters, and it integrates more than half a million microscopic components on a single piece of silicon.

“This result demonstrates the capabilities of FMCW LiDAR FPA sensors as enablers of ubiquitous, low-cost, compact coherent 4D imaging cameras,” the researchers wrote in their paper.

This capability is increasingly important as robots and autonomous systems move beyond controlled factory environments into warehouses, public spaces, and other settings where objects constantly change position and speed. By integrating distance and motion sensing on a single chip, the design reduces system complexity, simplifies hardware architectures, and lowers power consumption compared with conventional multi-sensor setups.

The team plans to further improve range and resolution, but the technology’s potential extends beyond robotics: it could eventually bring high-performance 4D imaging to consumer smartphone cameras.

The research aligns with a broader industry push toward more capable machine perception. Companies like Aeva Technologies are already commercializing FMCW-based 4D LiDAR for automotive and industrial use, and manufacturers of autonomous mobile robots are increasingly adopting sensor fusion approaches that combine vision, LiDAR, and inertial measurement. A compact, low-cost chip that handles both spatial mapping and velocity tracking in one package could accelerate that trend significantly.

The findings were published in the journal Nature.