On March 21, 2006, at 12:50 PM Pacific Standard Time, a 29-year-old software engineer named Jack Dorsey typed five words into a bare-bones web interface and pressed “update.”

The message read:

“just setting up my twttr”

That single, lowercase, vowel-stripped sentence is now recognised as the first tweet ever sent. It was posted from a cramped office in San Francisco’s South Park neighbourhood, inside a company that had no public name, no users outside the building, and almost no money. No one in the room that afternoon, let alone anyone outside it, could have predicted that this throwaway status update would go on to reshape how the world communicates, argues, organises, and consumes information for the next twenty years.

The idea had been taking shape for months. Dorsey was fixated on the immediacy of SMS and the raw, unfiltered quality of status updates on early blogging platforms. He wanted something even more stripped back: a way for anyone to broadcast what they were doing right now, compressed into 140 characters, a limit chosen so that a message and its sender’s username would fit inside a single 160-character SMS. The working name was “twttr,” its missing vowels borrowed from the naming conventions of the era (Flickr being the obvious influence) and its sound evoking the chirp of birds.

The team building it was remarkably small. Dorsey worked alongside Evan Williams, who had sold Blogger to Google in 2003; Biz Stone, a creative director with a background in blogging tools; and a handful of engineers. They were all employed by Odeo, a struggling podcasting startup, and what would become Twitter was, in those early weeks, little more than a side project built on borrowed time. On that March afternoon, the prototype was so rudimentary that Dorsey had to manually refresh the page to confirm his own message had appeared. There were no likes, no retweets, no replies, no hashtags. Just a single public timeline scrolling downward.

Twitter, the name officially adopted later that year, was conceived not as a conversation platform but as a broadcasting tool. Dorsey envisioned it as a real-time pulse of humanity, built around one deceptively simple prompt: “What are you doing?” That question, and the constraint it imposed, would go on to power citizen journalism during the Arab Spring, real-time election monitoring across dozens of countries, global movements like #MeToo and #BlackLivesMatter, and the explosive, often ungovernable spread of both breaking news and misinformation.

Growth in the early months was glacial. By the end of 2006, Twitter had only a few thousand users, nearly all of them tech insiders in San Francisco and New York. The turning point came at the 2007 South by Southwest conference, where the company mounted large screens in the hallways displaying a live feed of tweets. Attendees became instantly hooked. Sign-ups surged. The servers buckled. The “fail whale,” the cartoon error image that appeared when the site crashed, became one of the most recognisable symbols of the early social web.

For all its cultural power, Twitter never fully figured out how to turn influence into a sustainable business. The company went public in 2013 at a valuation of over $14 billion, but spent the following decade struggling with stagnant user growth, inconsistent leadership, and an advertising model that consistently underperformed against Facebook and Google.

By the time Elon Musk completed his $44 billion acquisition in October 2022, the platform was already fragile. What followed accelerated what many experts describe as the death of Twitter.

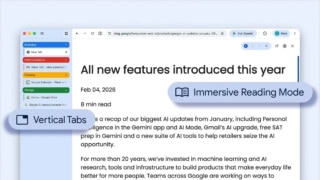

Musk gutted the workforce, slashing roughly 80% of staff in a matter of months. He dismantled the trust and safety team, reversed permanent bans on high-profile accounts, and replaced the legacy verification system with a paid blue-check subscription that eroded the credibility the badge had once carried. The company was renamed X in mid-2023, a rebrand that alienated users and advertisers alike. Major brands pulled spending. Journalists and public figures migrated to Threads, Bluesky, and Mastodon.

Internal infrastructure degraded as institutional knowledge walked out the door. By 2025, X’s advertising revenue had fallen to a fraction of what Twitter once generated, and the platform’s role as the world’s real-time public square had been fractured beyond recognition. What Dorsey built as an open, chaotic, but fundamentally democratic broadcast network had been reshaped into something closer to a personal megaphone for its owner, a cautionary tale about what happens when a platform’s identity becomes inseparable from the politics of the person who controls it.

Viewed from 2026, that first tweet feels almost impossibly quaint. The platform it spawned has since been renamed X, overhauled with algorithmic feeds, layered with blue-check monetisation and Grok AI integration, and transformed into a theatre of political combat that Dorsey never intended. But the DNA of that original design, the short-message constraint (140 characters at launch, doubled to 280 in 2017), the public timeline, the idea that anyone could broadcast to anyone, remains embedded in the architecture. Dorsey himself has said in interviews that he never envisioned the platform becoming a global town square. He simply wanted a better version of the status message he used on AOL Instant Messenger.

The first tweet marked the beginning of the micro-blogging era, a shift in how people relate to information and to each other online. It laid the conceptual groundwork for Instagram Stories, TikTok’s real-time intimacy, and the always-on digital presence that billions of people now treat as normal. It also planted the seeds of the attention economy, the system in which outrage consistently travels faster than nuance, virality has become a business model, and the line between information and performance has dissolved almost entirely.

Twenty years on, that five-word message endures as a quiet monument to an uncomfortable truth about technology: the innovations that change the world most profoundly rarely announce themselves. They begin with someone typing a few unremarkable words into a half-finished interface, not knowing what comes next.