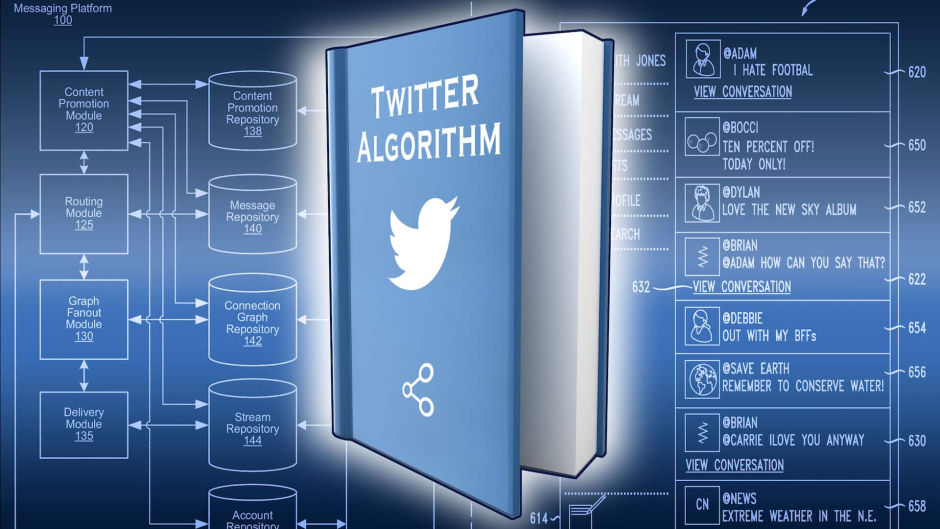

Twitter CEO Elon Musk says that the social media platform’s algorithm is finally going open source, and it’s happening “next week.” The idea of making Twitter’s algorithm open source isn’t new: Musk has been pushing for Twitter’s code to be opened up since before he purchased the company for $44 billion last October.

Prepare to be disappointed at first when our algorithm is made open source next week, but it will improve rapidly!

— Elon Musk (@elonmusk) February 21, 2023

Twitter algorithm should be open source

— Elon Musk (@elonmusk) March 24, 2022

“I’m worried about de facto bias in ‘the Twitter algorithm’ having a major effect on public discourse. How do we know what’s really happening? Open source is the way to go to solve both trust and efficacy,” Musk said in another tweet.

According to the Brookings Institution, a US policy think tank, opening machine learning algorithms to the wider developer community can speed adoption and advance science by cutting the time researchers spend building their own software tools.

Brookings said open-source algorithms could also boost competition in the tech sector, help define AI standards and, as Musk implied, help fight algorithmic bias by inviting others to examine the code and run it through algorithm interrogation software.

Twitter’s Community Notes team said yesterday that it has added a new capability to the fact-checking feature: notifications when new notes appear on tweets that users previously interacted with.

“Starting today, you’ll get a heads up if a Community Note starts showing on a Tweet you’ve replied to, Liked, or Retweeted. This helps give people extra context that they might otherwise miss.”

Community Notes lets Twitter users registered as “contributors” (signing up is free, at least for now) leave notes on a tweet that provide more context. Other contributors can rate a note as helpful or not helpful, and once the note reaches a certain threshold of positive ratings, it is shown publicly on the tweet.

Qualifications for becoming a contributor are minimal: you need a phone number on your account, no recent violations of Twitter’s rules, and an account that has been on the platform for a certain amount of time, among other requirements. Twitter says it reviews all applications and that it rates notes based on input from contributors with different points of view, which appears to be determined algorithmically.

The US Supreme Court heard oral arguments in Gonzalez et al. v. Google yesterday, and the outcome could reshape how the internet functions.

At issue is Section 230 of the Communications Decency Act, which states that service providers hosting third-party content can’t be held liable for whatever their users upload. A central question in the case heard yesterday was whether that section of the CDA also shields companies like Google when they make targeted recommendations.

As we noted in our coverage of the Google case, legal experts told us that the Supreme Court is unlikely to rule against Google.

Obviously, Google and other tech industry giants don’t want to lose this case, as it could mean they’re held liable for the behavior of their algorithms. It’s likely Musk doesn’t want to face that kind of liability at Twitter either, given the company’s current financial situation.

A related case, Twitter v. Taamneh, asks whether a company that provides generic services to users and regularly works to prevent terrorists from using those services knowingly provided substantial assistance to terrorists “because it allegedly could have taken more ‘meaningful’ or ‘aggressive’ action to prevent such use,” lawyers argue in the docket.

The plaintiffs, relatives of a victim of a 2017 ISIS attack, argue that Twitter did little to stop the spread of videos produced by ISIS and is therefore liable for aiding and abetting terrorism.
