Anthropic inadvertently exposed the full source code of its popular AI coding tool Claude Code after a debugging file was accidentally included in a routine software update. The leak revealed roughly 500,000 lines of code across nearly 2,000 files, including unreleased features and the complete architecture of the tool’s agentic harness. It is Anthropic’s second major security lapse in a single week. The company that markets itself as the safety-first AI lab just shipped its own source code to the public.

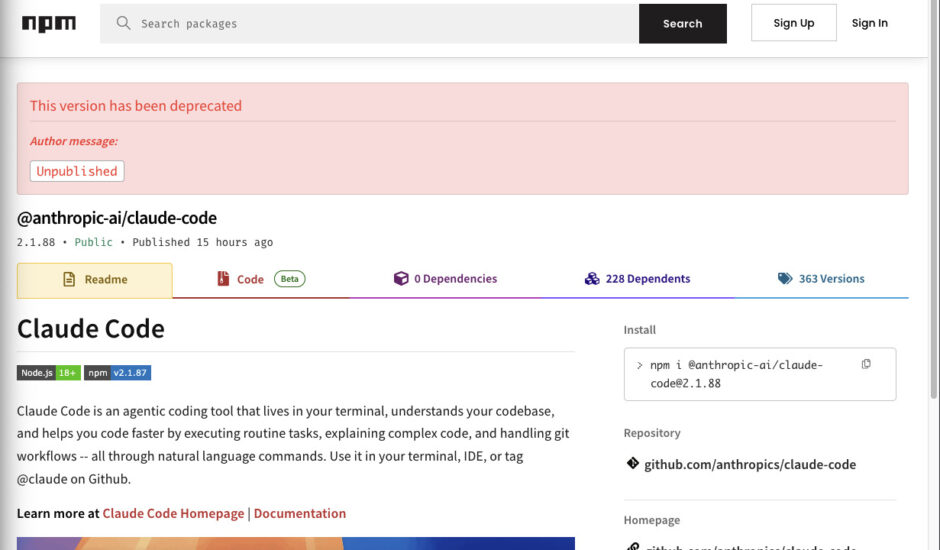

On March 31, security researcher Chaofan Shou discovered that Anthropic had accidentally bundled a source map file inside version 2.1.88 of its Claude Code npm package. The file pointed directly to a zip archive on Anthropic's own cloud storage containing the full, unobfuscated TypeScript source code. Within hours, the codebase was mirrored on GitHub and forked more than 41,500 times, so it is now effectively impossible to pull back.

Claude code source code has been leaked via a map file in their npm registry!

Code: https://t.co/jBiMoOzt8G pic.twitter.com/rYo5hbvEj8

— Chaofan Shou (@Fried_rice) March 31, 2026

Anthropic confirmed the leak, calling it a packaging error caused by human error rather than a security breach. The company said no customer data or credentials were exposed. It also clarified that what leaked was not the Claude AI model itself but the entire software harness that makes it function as a coding agent.

This includes tool orchestration logic, permission enforcement, memory architecture, and multi-agent coordination. Claude Code has seen explosive adoption, with annualized revenue estimated at $2.5 billion as of February, a figure Anthropic has not confirmed.

Developers found 44 hidden feature flags for unshipped capabilities, including an autonomous background mode called "KAIROS" that would let Claude Code keep working while users are idle. References to a new model, known internally as both "Mythos" and "Capybara", were also discovered.

Just days earlier, Anthropic had accidentally left nearly 3,000 files publicly accessible through a misconfigured content management system, including details about unreleased models. Two major exposures in one week raise serious questions about operational security at a company that has built its entire brand around responsible AI development.

The question now is whether competitors or malicious actors will treat the leak as a free blueprint for building a production-grade AI coding agent. Some are already moving in that direction: many GitHub users now advertise their own builds of Claude Code. That does not mean they can get away with it, and they are likely risking legal action.

Justin Schroeder, creator of dmux, ArrowJS, FormKit, AutoAnimate, Tempo, and zodown, took to X with a warning:

“Just because the source is now ‘available’ DOES NOT MEAN IT IS OPEN SOURCE. You are violating a license if you copy or redistribute the source code, or use their prompts in your next project! Don’t do that… Keep in mind that even though this is the first time we’ve gotten a proper full-source dump, it has never been impossible to read Claude Code’s prompting since it was part of the actual distributed package — that’s surprising.”

For Anthropic, the episode is a pointed reminder that protecting intellectual property requires the same rigor it asks its enterprise customers to maintain.