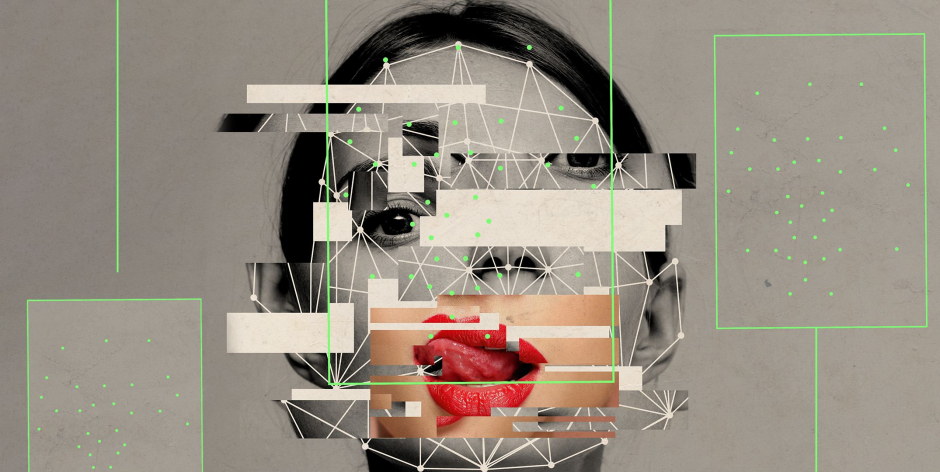

Making realistic AI porn once took computer expertise. But with the recent release of numerous user-friendly AI tools, pretty much anyone can now make deepfake porn with little computer or AI knowledge. Incidents of harassment and extortion are likely to rise, abuse experts say, as bad actors use AI models to humiliate targets ranging from celebrities to ex-girlfriends and even children.

Deepfakes are videos and images that have been digitally created or altered with artificial intelligence or machine learning. Porn created using the technology first began spreading across the internet several years ago when a Reddit user shared clips that placed the faces of female celebrities on the shoulders of porn actors.

Deepfake creators have disseminated similar videos and images targeting online influencers, journalists, and others with a public profile. Thousands of videos exist across a plethora of websites, and some sites have been offering users the opportunity to create their own images, essentially allowing anyone to turn whoever they wish into sexual fantasies without their consent, or to use the technology to harm former partners.

The problem, experts say, grew as it became easier to make sophisticated and visually compelling deepfakes. And they say it could get worse with the development of generative AI tools that are trained on billions of images from the internet and spit out novel content using existing data.

Adam Dodge, the founder of EndTAB, a group that provides training on technology-enabled abuse, said:

“The reality is that the technology will continue to proliferate, will continue to develop, and will continue to become sort of as easy as pushing the button. And as long as that happens, people will undoubtedly … continue to misuse that technology to harm others, primarily through online sexual violence, deepfake pornography, and fake nude images.”

OpenAI says it removed explicit content from data used to train the image-generating tool DALL-E, which limits the ability of users to create those types of images. The company also filters requests and says it blocks users from creating AI images of celebrities and prominent politicians. Midjourney, another model, blocks the use of certain keywords and encourages users to flag problematic images to moderators.

Meanwhile, the startup Stability AI rolled out an update in November that removes the ability to create explicit images using its image generator Stable Diffusion. Those changes came following reports that some users were creating celebrity-inspired nude pictures using the technology.

Stability AI spokesperson Motez Bishara said the filter uses a combination of keywords and other techniques like image recognition to detect nudity and returns a blurred image. But it’s possible for users to manipulate the software and generate what they want since the company releases its code to the public. Bishara said Stability AI’s license “extends to third-party applications built on Stable Diffusion” and strictly prohibits “any misuse for illegal or immoral purposes.”

Some social media companies have also been tightening up their rules to better protect their platforms against harmful materials.

TikTok said last month that all deepfakes or manipulated content that show realistic scenes must be labeled to indicate they’re fake or altered in some way and that deepfakes of private figures and young people are no longer allowed. Previously, the company had barred sexually explicit content and deepfakes that mislead viewers about real-world events and cause harm.

The gaming platform Twitch also recently updated its policies around explicit deepfake images after a popular streamer named Atrioc was discovered to have a deepfake porn website open on his browser during a live stream in late January. The site featured phony images of fellow Twitch streamers.

Twitch already prohibited explicit deepfakes, but now showing a glimpse of such content — even if it’s intended to express outrage — “will be removed and will result in an enforcement,” the company wrote in a blog post. And intentionally promoting, creating, or sharing the material is grounds for an instant ban.

Other companies have also tried to ban deepfakes from their platforms, but keeping them off requires diligence.

The same app removed by Google and Apple had run ads on Meta’s platform, which includes Facebook, Instagram, and Messenger. Meta spokesperson Dani Lever said in a statement the company’s policy restricts both AI-generated and non-AI adult content and it has restricted the app’s page from advertising on its platforms.

In February, Meta, as well as adult sites like OnlyFans and Pornhub, began participating in an online tool called Take It Down, which allows teens to report explicit images and videos of themselves so they can be removed from the internet. The reporting site works for regular images and AI-generated content, which has become a growing concern for child safety groups.

“When people ask our senior leadership what are the boulders coming down the hill that we’re worried about? The first is end-to-end encryption and what that means for child protection. And then second is AI and specifically deepfakes,” said Gavin Portnoy, a spokesperson for the National Center for Missing and Exploited Children, which operates the Take It Down tool.
