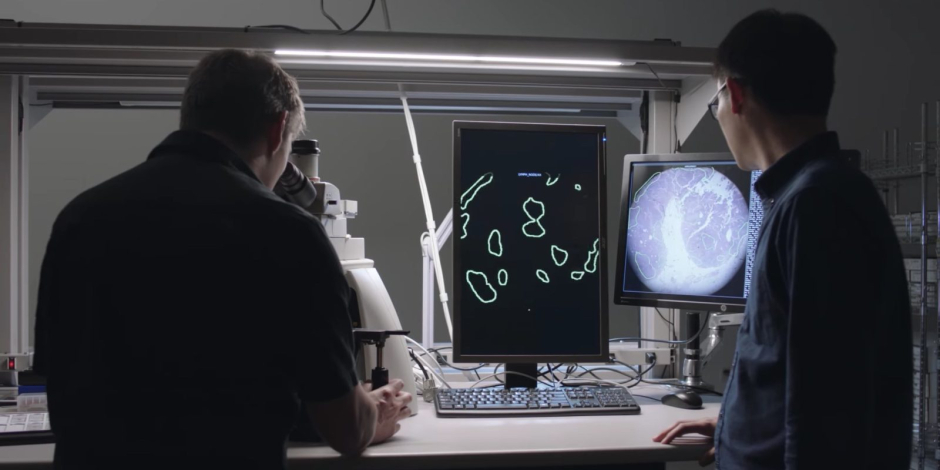

Google’s artificial intelligence research division has built an augmented reality microscope (ARM) for cancer detection. The modified microscope uses machine learning to detect cancerous cells.

Cancer diagnosis has always been a time-consuming process. After a biopsy is taken from a suspected cancerous mass, a pathologist has to examine the cells under a microscope. Google has previously shown that a neural network can accelerate cancer diagnosis from digital images, but most pathologists still examine slides with compound light microscopes. Google’s answer is the Augmented Reality Microscope. With this system, Google’s AI sees the microscope image in real time alongside the doctor and displays its analysis on top of that image.
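To make the idea of "analysis on top of the image" concrete, here is a minimal sketch of overlaying per-pixel model scores on a frame. It is not Google’s implementation: the function name `overlay_heatmap`, the `threshold` and `alpha` parameters, and the tiny hand-written frame and scores are all assumptions made for illustration.

```python
def overlay_heatmap(frame, scores, threshold=0.5, alpha=0.4):
    """Blend a red highlight into pixels whose score meets the threshold.

    frame:  rows of (r, g, b) tuples in 0-255, standing in for the microscope view
    scores: rows of floats in [0, 1], standing in for a model's per-pixel output
    (Both inputs are hypothetical; a real system would use camera frames
    and a trained network.)
    """
    highlight = (255, 0, 0)  # assumed highlight color
    out = []
    for frame_row, score_row in zip(frame, scores):
        out_row = []
        for pixel, score in zip(frame_row, score_row):
            if score >= threshold:
                # Higher scores get a stronger tint, capped by alpha.
                weight = alpha * score
                pixel = tuple(
                    round((1 - weight) * c + weight * h)
                    for c, h in zip(pixel, highlight)
                )
            out_row.append(pixel)
        out.append(out_row)
    return out


# A 2x2 stand-in for a tissue image and a matching grid of model scores.
frame = [[(200, 180, 190), (200, 180, 190)],
         [(200, 180, 190), (200, 180, 190)]]
scores = [[0.1, 0.9],
          [0.2, 1.0]]
blended = overlay_heatmap(frame, scores)
```

Pixels below the threshold are left untouched, so the pathologist’s view of ordinary tissue is unchanged and only the flagged regions are tinted.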

Google has previously used neural networks to detect breast cancer, with accuracy comparable to that of a trained pathologist. At present, cancer is diagnosed primarily with compound light microscopes. However, newer deep learning approaches to analyzing cancerous cells require a digital representation of the microscopic tissue.

Google’s AI head Jeff Dean said:

“Google’s augmented reality microscope (ARM) combines both methods, it blend[s] the expertise of automated machine learning systems with human expertise.”

Martin Stumpe and Craig Mermel of the Google Brain Team said:

“In principle, the ARM can provide a wide variety of visual feedback, including text, arrows, contours, heatmaps or animations, and is capable of running many types of machine learning algorithms aimed at solving different problems such as object detection, quantification or classification.”

Google said:

“ARM has the potential for a large impact on global health, particularly for the diagnosis of infectious diseases, including tuberculosis and malaria, in developing countries.”

The ARM can be used in conjunction with existing digital pathology workflows and could be extended to other fields such as healthcare, life sciences research, and materials science.